The Legal Profession Is Using AI. It Hasn't Integrated It Yet.

There's a difference between having AI tools and having an AI-capable practice. Most legal organizations are still on the wrong side of that line.

The data points are hard to ignore. Goldman Sachs estimated that 44% of legal tasks have automation potential. Self-represented parties in AAA arbitration proceedings increased by more than 50% in Q1 2026 compared to the same period last year. These aren't fringe statistics. They describe a profession mid-shift — one where the analytical infrastructure that used to require a full litigation team is increasingly accessible to anyone willing to learn how to use it.

The legal profession's response has been largely tool-focused: which AI platforms are safe, which ones comply with confidentiality requirements, which ones have been blessed by bar guidance. That's the right question for one specific problem — citation verification. It's the wrong question for the larger transformation happening right now.

The Overcorrection That Created a New Vulnerability

When attorney Steven Schwartz filed a brief in 2023 citing cases that didn't exist — generated by ChatGPT and unverified — the profession took the right lesson and drew the wrong conclusion.

The right lesson: AI-generated legal citations must be independently verified. General consumer AI tools are prediction engines, not legal databases. They generate statistically likely output, not retrieved authority. That distinction is foundational, and Schwartz's failure to understand it cost him.

The wrong conclusion: general AI is the problem, and legal-specific platforms are the solution.

That framing produced an overcorrection that has been quietly widening the gap between law firms and the self-represented parties now appearing in their proceedings. A pro se litigant is not bound by institutional AI policy, bar guidance, or malpractice exposure. They don't need approval to upload their documents, build a knowledge base across their full case record, and ask a general AI tool to identify patterns, surface contradictions, and construct arguments.

They simply do it.

Meanwhile, the attorney who adopted verified citation platforms and stopped there has solved the citation problem and capped their own growth in the process. Legal-specific platforms are extraordinarily precise at what they were designed to do: retrieval and verification. They are not strategic reasoning tools. They answer discrete questions well. They don't build and maintain a cumulative understanding of an evolving case record.

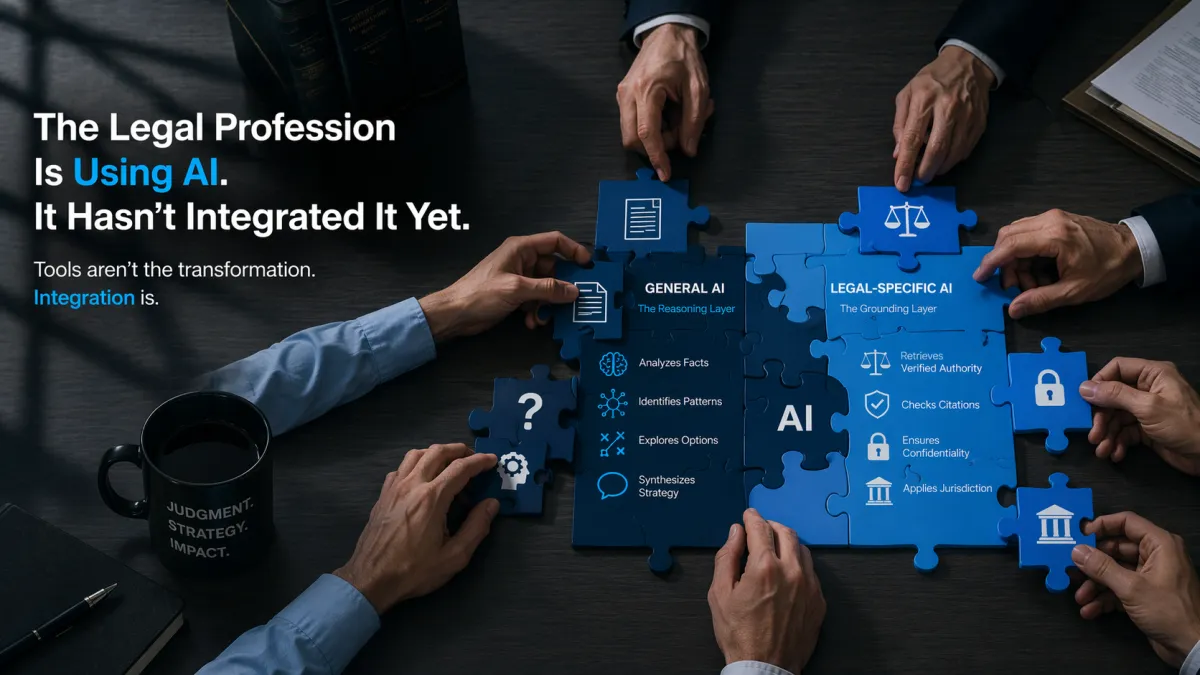

Two Categories. Two Functions. Neither Is Sufficient Alone.

General AI tools — Claude, ChatGPT, NotebookLM and others — are prediction engines. That description is often used dismissively. It shouldn't be.

A senior attorney reviewing a complaint doesn't simply retrieve facts. She predicts how a judge will read the allegations. She infers the opposing party's strategy. She constructs a rhetorical framework that makes her client's position coherent. These are cognitive acts of prediction, inference, and construction, and general AI now performs all three at a genuinely useful level.

General AI can surface patterns across a large document set that no human reviewer would catch in real time. It can identify contradictions between a party's current position and prior communications. It can construct alternative theories, stress-test arguments, and generate first-draft responsive language that is strategically coherent. None of this requires verified case law.

Legal-specific platforms such as CoCounsel, Lexis Protege, Westlaw AI, Vincent AI, and Harvey AI are built around retrieval-augmented generation: they search verified legal databases, retrieve actual authority, and ground their outputs in what was retrieved rather than predicted. That architecture is designed to keep fabricated authority out of the output. It also addresses Rule 1.6 confidentiality requirements at the design level, with zero-retention architecture and enterprise-grade security that general consumer tools don't offer by default.
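For readers who want to see the grounding idea in concrete form, here is a minimal sketch in Python. It is not how any named platform works internally. The `Authority` records, the `VERIFIED_DB` list, the keyword-overlap matching, and the function names are all hypothetical placeholders chosen for illustration. The point it shows is narrow: a grounded system cites only what it actually retrieved, and reports that it found nothing rather than producing a plausible-looking source.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Authority:
    """One entry in a hypothetical verified database (placeholder data only)."""
    cite_id: str
    text: str


# Placeholder entries. A real platform would query a curated legal database.
VERIFIED_DB = [
    Authority("AUTH-001", "arbitration clause enforceable when both parties signed"),
    Authority("AUTH-002", "late filing excused on showing of good cause"),
]


def tokenize(text: str) -> set[str]:
    """Lowercase the text and split it into a set of words."""
    return set(text.lower().split())


def retrieve(query: str, db: list[Authority], min_overlap: int = 2) -> list[Authority]:
    """Return entries that share at least `min_overlap` words with the query."""
    query_words = tokenize(query)
    return [a for a in db if len(query_words & tokenize(a.text)) >= min_overlap]


def grounded_answer(query: str, db: list[Authority]) -> dict:
    """Cite only retrieved entries; report nothing rather than invent a source."""
    hits = retrieve(query, db)
    if not hits:
        return {"citations": [], "note": "no verified authority found"}
    return {"citations": [a.cite_id for a in hits], "note": "grounded in retrieved text"}


if __name__ == "__main__":
    print(grounded_answer("is the arbitration clause enforceable", VERIFIED_DB))
    print(grounded_answer("statute of limitations for fraud", VERIFIED_DB))
```

The keyword matching here is deliberately crude. What matters is the shape of the contract: every citation in the output traces back to a record that exists, and an empty result is a valid answer.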

These are not competing categories. They address different cognitive functions. General AI handles the reasoning layer. Legal-specific AI handles the grounding layer. A practice that uses only one half of that capability has solved the wrong problem, or solved only part of the right one.

The Real Divide Isn't About Tools

AI didn't create a new legal skill. It exposed an old one.

If a task can be completed entirely by AI, that task was never measuring legal judgment. It was measuring process execution. Pulling case law, summarizing opinions, drafting memos, organizing arguments: these were always preparatory steps. AI made them faster and more accessible. The ceiling of legal value, meaning interpretation, strategy, risk assessment, and judgment under incomplete information, was never in those tasks. That part is still human. And in an environment where the floor has dropped dramatically, the ceiling is where the profession's value is now measured.

What changes is the scaffold. AI reasoning across a full matter record surfaces the questions. Legal-specific retrieval answers them with verified authority. The lawyer synthesizes, decides, and produces work product that reflects the full analytical capacity of the tools and the irreplaceable judgment of the professional who signs it.

That's not three separate tasks. It's one integrated workflow. Most legal organizations haven't built it.

The Diagnostic

Three questions reveal where a legal organization actually is — not where it believes it is.

How is the matter organized, right now? Not how it should be — how it actually is. Are documents centralized and accessible, or scattered across inboxes, shared drives, and the memory of whoever is closest to the file? AI reasoning is only as strong as the record it's reasoning from. A fragmented matter produces fragmented conclusions.

Where does the intelligence of the case live? In many offices, the honest answer is: in one attorney's memory, in email chains nobody has organized, in undocumented strategic assumptions. In an integrated workflow, the knowledge base compounds over time — new documents, new developments, and new decisions added to an organized, accessible record. Without that structure, every session starts over.

How does work actually move from information to output? Are general AI and legal-specific platforms being used in coordination, with structured handoffs between reasoning and retrieval — or independently, for separate tasks, with the lawyer manually bridging the gap?
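One way to picture a structured handoff between reasoning and retrieval is the sketch below. It is an illustration under stated assumptions, not a description of any product. `MatterRecord`, `reasoning_pass`, and `retrieval_pass` are hypothetical names, and the two callables passed in stand for whatever general AI tool and legal-specific platform a practice actually uses. The idea is that both steps read from and write to the same record, so each session builds on the last.

```python
from collections.abc import Callable
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class MatterRecord:
    """A single shared record that every session reads from and appends to."""
    documents: list[str] = field(default_factory=list)
    open_questions: list[str] = field(default_factory=list)
    resolved: dict[str, str] = field(default_factory=dict)


def reasoning_pass(
    record: MatterRecord,
    find_questions: Callable[[list[str]], list[str]],
) -> None:
    """Stand-in for the general-AI step: add questions not already tracked."""
    for question in find_questions(record.documents):
        already_tracked = question in record.open_questions or question in record.resolved
        if not already_tracked:
            record.open_questions.append(question)


def retrieval_pass(
    record: MatterRecord,
    lookup: Callable[[str], Optional[str]],
) -> None:
    """Stand-in for the legal-specific step: attach authority where it exists.

    Questions with no authority stay open for the lawyer to decide.
    """
    still_open = []
    for question in record.open_questions:
        authority = lookup(question)
        if authority is None:
            still_open.append(question)
        else:
            record.resolved[question] = authority
    record.open_questions = still_open
```

A usage example would create one `MatterRecord` per matter, run `reasoning_pass` whenever new documents arrive, and run `retrieval_pass` afterward. Whatever remains in `open_questions` is the list that needs a lawyer's judgment, and nothing already resolved gets asked again.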

The question is not whether a legal organization has AI tools. It is whether those tools are working from a shared, organized matter record — and whether the reasoning and retrieval functions compound over time rather than starting over every session.

The gap between adoption and integration is where the profession's real risk is accumulating. And it is accumulating quietly.

Education Media LLC works with legal professionals, organizations, and educators on AI-integrated workflow design and professional readiness. A new platform launches July 2026.
